The Evolution of Voice Assistants from Commands to Conversations

Journey through the voice assistant timeline, from the 16-word IBM Shoebox to the sophisticated AI-driven interactions of today.

The first time I spoke to a device and it responded, I felt like I’d stepped into the future. Like many of you, I remember that mixture of surprise and delight, that sci-fi moment come to life. Fast forward to today, and we barely raise an eyebrow when asking our smartphones for directions or telling our speakers to play music.

This evolution of voice assistants, from clunky, command-driven interfaces to the natural conversations we now have with our devices, tells an incredible story of human ingenuity and persistence.

The History of The Voice Revolution: Teaching Machines to Listen

The evolution of voice assistants can be traced back to the 1950s and 60s. Bell Labs’ “Audrey” (1952) could recognize spoken digits, and IBM’s “Shoebox”, shown publicly in the early 1960s, understood 16 words: the ten digits plus six arithmetic commands. These early speech recognition systems required precise, slow speech and behaved more like rigid tools than true virtual assistants. Despite the limited vocabulary, these projects laid the groundwork for basic speech recognition.

The Pre-Modern Era: Building the Foundation

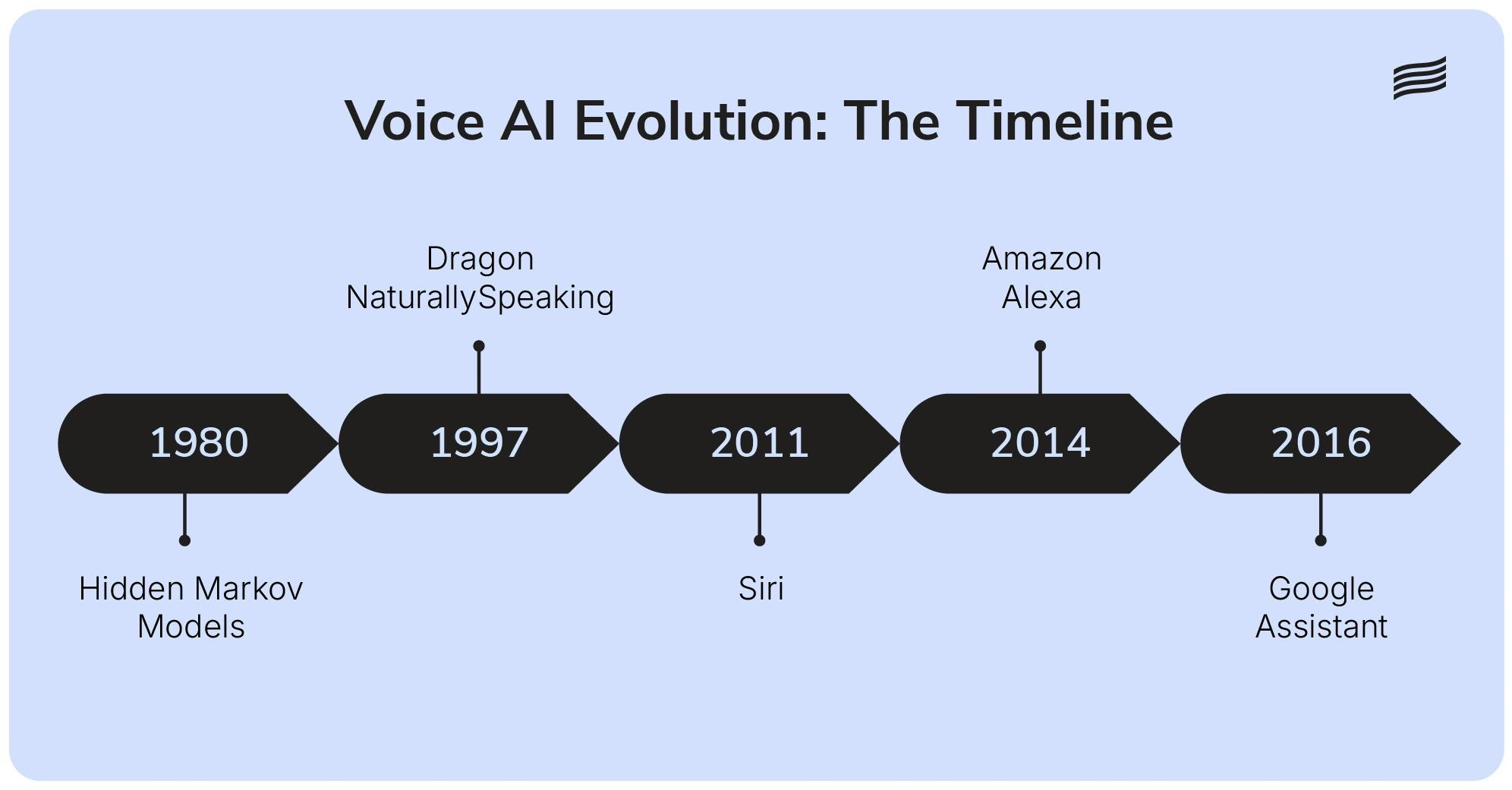

The 1970s and 80s brought breakthroughs in speech recognition technology with Hidden Markov Models, supported by research from DARPA and Carnegie Mellon. In the 90s, DragonDictate and later Dragon NaturallySpeaking brought dictation software to consumers. NaturallySpeaking in particular amazed users by transcribing continuous speech, even if it often got things hilariously wrong, especially when there was background noise.

Even though Microsoft’s Clippy wasn’t voice-activated, it highlighted an important lesson in the evolution of voice assistants: context and timing matter more than availability in building helpful assistants.

The Modern Revolution: Siri Changes Everything

A major shift came in 2011 when Apple introduced Siri on the iPhone 4S. For the first time, a voice interface was in millions of pockets. Amazon Alexa (2014) and Google Assistant (2016) soon followed, bringing voice control into our homes and daily routines. These early assistants were limited, but they represented a leap in how we interact with machines through speech.

Beyond Commands: The Conversation Revolution

Natural Language Processing (NLP) and Natural Language Understanding (NLU) transformed assistants from command receivers into conversational partners. Devices began to understand casually phrased requests, so a rigid command like:

“Set a timer for 10 minutes”

became

“Hey, could you time the cookies for about 10 minutes?”

This marked a fundamental shift toward more human-like interactions and improved user experience.
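To make the shift concrete, here is a minimal sketch of how both phrasings above can resolve to the same intent. It is a toy, rule-based stand-in for intent and slot extraction: real assistants use trained NLU models, and the names `parse_timer_request`, `TIMER_CUES`, and `DURATION` are hypothetical, chosen only for this illustration.

```python
import re
from typing import Optional

# Assumption: a tiny set of cue words is enough to spot a timer request.
TIMER_CUES = re.compile(r"\b(timer|time|countdown)\b")
# A number followed by a time unit, anywhere in the utterance.
DURATION = re.compile(r"(\d+)\s*(second|minute|hour)s?\b")


def parse_timer_request(utterance: str) -> Optional[dict]:
    """Return a structured timer intent, or None if the utterance is not one."""
    text = utterance.lower()
    if TIMER_CUES.search(text) is None:
        return None
    match = DURATION.search(text)
    if match is None:
        return None
    return {
        "intent": "set_timer",
        "amount": int(match.group(1)),
        "unit": match.group(2),
    }


for phrase in (
    "Set a timer for 10 minutes",
    "Hey, could you time the cookies for about 10 minutes?",
    "What's the weather like?",
):
    print(phrase, "->", parse_timer_request(phrase))
```

The point of the sketch is that the wording can vary while the structured result stays the same. Hand-written rules like these break quickly on real speech, which is why production systems learn this mapping from data instead.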

The AI Explosion: Large Language Models

Since around 2018, artificial intelligence and large language models have enabled assistants to understand nuance: slang, idioms, context, and even culture. Deep learning and increased computational power in data centers pushed things even further. On the speech recognition side, models like OpenAI’s Whisper have shown significant progress in reducing the word error rate, the share of words a system gets wrong compared with a reference transcript, and they handle multilingual conversations and colloquial dialects far better than earlier systems.
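Word error rate is usually computed as the number of word substitutions, deletions, and insertions needed to turn the system's transcript into the reference transcript, divided by the number of words in the reference. The sketch below shows that calculation with a standard edit-distance table. The function name `word_error_rate` and the lowercase, whitespace-based tokenization are simplifying assumptions, not how any particular vendor scores its models.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# One wrong word out of six reference words.
print(word_error_rate("set a timer for ten minutes",
                      "set the timer for ten minutes"))
```

A lower value is better, and because insertions count as errors the rate can exceed 1.0 for very poor transcripts.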

Where Are We With Voice Assistants Today?

Today’s assistants can:

- Maintain context across multiple interactions.

- Integrate with broader ecosystems.

- Understand and remember user preferences.

It’s no longer just about giving commands; it’s about exchanges. Ask “What’s the weather like?” and then “And this weekend?”, and you get the answer without repeating the question.
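The weather follow-up works because the assistant keeps some state between turns. Here is a minimal sketch of that idea, assuming a simple keyword check in place of a real language model. `DialogueState` and `interpret` are hypothetical names used only for this example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DialogueState:
    """What the assistant remembers from earlier turns."""
    intent: Optional[str] = None
    slots: dict = field(default_factory=dict)


def interpret(utterance: str, state: DialogueState) -> DialogueState:
    """Update the state in place; a follow-up only overrides what it mentions."""
    text = utterance.lower()
    if "weather" in text:
        # A fresh weather question resets the intent and defaults to today.
        state.intent = "get_weather"
        state.slots = {"when": "today"}
    if "weekend" in text:
        # The follow-up names a new time but keeps the remembered intent.
        state.slots["when"] = "this weekend"
    return state


state = DialogueState()
for turn in ("What's the weather like?", "And this weekend?"):
    interpret(turn, state)
    print(turn, "->", state.intent, state.slots)
```

The second turn never mentions weather, yet the remembered intent lets the assistant treat it as the same question with a different time frame.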

What Does The Future For Voice Assistants Look Like?

Looking ahead, expect assistants to:

- Understand Emotion: Emotional intelligence will make responses more human and helpful.

- Offer Multimodal Interaction: Voice AI will integrate more deeply with visual and tactile input.

- Play Larger Roles: From healthcare to education, voice assistants will be woven into daily life.

Some industry forecasts have projected that 75% of households in developed markets would be using voice-enabled devices by 2025.


How Ringg AI Embodies the Future of AI Voice Agents

At Ringg AI, we are actively building the future of AI voice agents. We believe that technology should feel less like a tool and more like a helpful partner. That's why we've engineered our voice agents to handle over 10,000 concurrent calls without losing that critical human touch, ensuring that no customer is ever left on hold. We help patients book appointments, assist students with their enrollment, make it easier for customers to get answers to their queries, and much more. We're bridging the gap between artificial intelligence and genuine connection, proving that the future of voice isn't just about speaking. It's also about being heard.

To see exactly how modern voice AI agents help businesses automate more and scale faster, book a demo with us.

We don't pitch AI hype.

We deliver business outcomes.

Frequently Asked Questions

The history of the voice revolution began with systems like Audrey in the 1950s and Shoebox in the early 1960s, which were limited to recognizing spoken digits and a handful of arithmetic commands. Over the decades, the field moved from simple pattern matching to complex neural networks that can process full sentences in real time.
